This post continues from Part 1.

I. Data classification

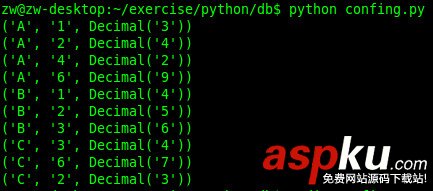

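- Correct data: id, gender and last-active time are all present

Stored in this file: file1 = 'ruisi//correct%s-%s.txt' % (startNum, endNum)

Record format: 293001 男 2015-5-1 19:17 (男 means male, 女 means female)

- No time: id and gender are present, but there is no last-active time

Stored in this file: file2 = 'ruisi//errTime%s-%s.txt' % (startNum, endNum)

Record format: 2566 女 notime

- User does not exist: no user corresponds to this id

Stored in this file: file3 = 'ruisi//notexist%s-%s.txt' % (startNum, endNum)

Record format: 29005 notexist

- Unknown gender: the id exists, but the gender cannot be determined from the web page (on inspection, these users have no last-active time either)

Stored in this file: file4 = 'ruisi//unkownsex%s-%s.txt' % (startNum, endNum)

Record format: 221794 unkownsex

- Network error: the connection dropped or the server failed, so these ids need to be checked again

Stored in this file: file5 = 'ruisi//httperror%s-%s.txt' % (startNum, endNum)

Record format: 271004 httperror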
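To make the five record formats concrete, here is a minimal sketch of a parser for them. It is not part of the original crawler: the function name parse_record and the returned tuple layout are assumptions made for illustration, and it is written for Python 3 rather than the Python 2 used in the rest of this post.

```python
def parse_record(line):
    """Split one output line into (user_id, status, gender, last_active).

    Hypothetical helper: the status names mirror the five files described above.
    """
    parts = line.split()
    user_id = int(parts[0])
    # Two fields: the second one is a status marker
    if len(parts) == 2 and parts[1] in ('notexist', 'unkownsex', 'httperror'):
        return user_id, parts[1], None, None
    # Three fields ending in 'notime': gender known, no last-active time
    if len(parts) == 3 and parts[2] == 'notime':
        return user_id, 'notime', parts[1], None
    # Otherwise: id, gender, then date and time of day
    return user_id, 'correct', parts[1], ' '.join(parts[2:])


print(parse_record('293001 男 2015-5-1 19:17'))
print(parse_record('2566 女 notime'))
print(parse_record('29005 notexist'))
```

A parser like this would be one way to read the files back when counting genders later on.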

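How to crawl without interruption

- One design goal of this project is to crawl without interruption. If the crawler exited whenever the network dropped or the BBS server failed, we would have to resume from the point of interruption or, worse, start over from the beginning.

- So my approach is to record the ids that hit a failure and, only after one full pass, crawl those ids again.

- Part 1 of this series provided getInfo(myurl, seWord), which fetches a given link and extracts information with a given regular expression.

- That function can be used to look up both the gender and the last-active time.

- We now define a safe crawl function that never interrupts the program, using try/except exception handling.

The code calls getInfo(myurl, seWord) up to twice; if the second attempt also raises an exception, the id is saved to file5.

If the information can be fetched, it is returned.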

    file5 = 'ruisi//httperror%s-%s.txt' % (startNum, endNum)

    def safeGet(myid, myurl, seWord):
        # first attempt
        try:
            return getInfo(myurl, seWord)
        except Exception:
            # second attempt
            try:
                return getInfo(myurl, seWord)
            except Exception:
                # both attempts failed: record the id in file5 for a later re-crawl
                httperrorfile = open(file5, 'a')
                info = '%d %s\n' % (myid, 'httperror')
                httperrorfile.write(info)
                httperrorfile.close()
                return 'httperror'

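Traversing the user ids in [1, 300,000] in order

We define a function whose logic is: fetch sex and time; if sex is present, go on to check whether time is present; if sex is missing, decide whether the user does not exist or the gender simply cannot be crawled.

A dropped connection or a BBS server failure has to be taken into account along the way.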

    url1 = 'http://rs.xidian.edu.cn/home.php?mod=space&uid=%s'
    url2 = 'http://rs.xidian.edu.cn/home.php?mod=space&uid=%s&do=profile'

    def searchWeb(idArr):
        for id in idArr:
            sexUrl = url1 % (id)  # replace %s with the id
            timeUrl = url2 % (id)
            sex = safeGet(id, sexUrl, sexRe)
            if not sex:
                # if no gender is found at sexUrl, try timeUrl as well
                sex = safeGet(id, timeUrl, sexRe)
            time = safeGet(id, timeUrl, timeRe)

            # on httperror the id has to be crawled again later
            # (compare strings with ==, not with "is")
            if (sex == 'httperror') or (time == 'httperror'):
                pass
            else:
                if sex:
                    info = '%d %s' % (id, sex)
                    if time:
                        info = '%s %s\n' % (info, time)
                        wfile = open(file1, 'a')
                        wfile.write(info)
                        wfile.close()
                    else:
                        info = '%s %s\n' % (info, 'notime')
                        errtimefile = open(file2, 'a')
                        errtimefile.write(info)
                        errtimefile.close()
                else:
                    # sex is None here, so check whether the user does not exist
                    # when the network is down, this extra call leads to 4 duplicate httperror records
                    # the gender may simply be unknown because the user never filled it in
                    notexist = safeGet(id, sexUrl, notexistRe)
                    if notexist == 'httperror':
                        pass
                    else:
                        if notexist:
                            notexistfile = open(file3, 'a')
                            info = '%d %s\n' % (id, 'notexist')
                            notexistfile.write(info)
                            notexistfile.close()
                        else:
                            unkownsexfile = open(file4, 'a')
                            info = '%d %s\n' % (id, 'unkownsex')
                            unkownsexfile.write(info)
                            unkownsexfile.close()
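The branching inside searchWeb can also be read as a small decision table. The sketch below restates it as a pure function with no network or file access, which makes it easy to check by hand. The name classify and its return values are assumptions for illustration, not part of the original program, and the code is written for Python 3.

```python
def classify(user_id, sex, last_active, notexist=None):
    """Return (category, record_line), or (None, None) if any lookup hit 'httperror'.

    Hypothetical helper. `notexist` is only meaningful when `sex` is empty,
    mirroring the order of the lookups in searchWeb.
    """
    if 'httperror' in (sex, last_active, notexist):
        return None, None  # already logged by safeGet; to be re-crawled later
    if sex:
        if last_active:
            return 'correct', '%d %s %s\n' % (user_id, sex, last_active)
        return 'errTime', '%d %s notime\n' % (user_id, sex)
    if notexist:
        return 'notexist', '%d notexist\n' % user_id
    return 'unkownsex', '%d unkownsex\n' % user_id


print(classify(2566, '女', None))
print(classify(29005, None, None, 'p>'))
print(classify(251538, 'httperror', 'httperror'))
```

Separating the decision from the I/O like this is only one possible design; the original keeps everything in a single loop.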

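A later check revealed a problem with this part of searchWeb:

    sex = safeGet(id, sexUrl, sexRe)
    if not sex:
        sex = safeGet(id, timeUrl, sexRe)
    time = safeGet(id, timeUrl, timeRe)

When the network is down, this code calls safeGet up to three times, and every failing call appends another httperror line for the same id to the text file:

    251538 httperror
    251538 httperror
    251538 httperror
    251538 httperror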
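One way to live with these duplicates is to collapse them when the httperror file is read back for the second pass. The following is a minimal sketch under that assumption; the function name unique_failed_ids is hypothetical, the original post does not show how the re-crawl list is built, and the code is written for Python 3.

```python
def unique_failed_ids(lines):
    """Return the ids marked 'httperror', each once, in first-seen order."""
    seen = set()
    ordered = []
    for line in lines:
        parts = line.split()
        # Expect exactly "<id> httperror"; skip anything else
        if len(parts) == 2 and parts[1] == 'httperror' and parts[0].isdigit():
            uid = int(parts[0])
            if uid not in seen:
                seen.add(uid)
                ordered.append(uid)
    return ordered


sample = ['251538 httperror\n'] * 4 + ['271004 httperror\n']
print(unique_failed_ids(sample))  # [251538, 271004]
```

The resulting list could then be passed to searchWeb in place of a contiguous id range.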

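Crawling with multiple threads?

The crawl can be parallelized because each worker writes to its own independent set of text files.

1. Introduction to Popen

Popen lets you customize standard input, standard output and standard error. During my internship at SAP, the project team used Popen a lot on Linux, probably because it makes redirecting output convenient.

The code below borrows the approach that project team used. Popen can run system shell commands. The three consecutive communicate() calls wait until all three "threads" have finished (strictly speaking, each Popen call starts a separate process, not a thread).

A puzzle?

In my experiments, the three communicate() calls have to sit right next to each other for the three workers to start at the same time and then be waited for together. The reason is that Popen launches the child immediately, whereas communicate() blocks until that child exits, so calling communicate() before all three have been launched would run them one after another.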

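    import subprocess
    from subprocess import Popen

    # s0..s3 are the boundaries of three consecutive id ranges
    p1 = Popen(['python', 'ruisi.py', str(s0), str(s1)], bufsize=10000, stdout=subprocess.PIPE)
    p2 = Popen(['python', 'ruisi.py', str(s1), str(s2)], bufsize=10000, stdout=subprocess.PIPE)
    p3 = Popen(['python', 'ruisi.py', str(s2), str(s3)], bufsize=10000, stdout=subprocess.PIPE)
    # wait for all three child processes to finish
    p1.communicate()
    p2.communicate()
    p3.communicate()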
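The launch-then-wait pattern can be tried out without the crawler. In this minimal sketch each child is a hypothetical stand-in for ruisi.py that just sleeps for one second. It is written for Python 3 and uses sys.executable so that the children run under the same interpreter.

```python
import subprocess
import sys
import time

# Hypothetical stand-in for 'ruisi.py': a child process that sleeps for one second
child = [sys.executable, '-c', 'import time; time.sleep(1)']

start = time.time()
# All three children start running as soon as Popen returns
procs = [subprocess.Popen(child) for _ in range(3)]
for p in procs:
    p.communicate()  # blocks until this particular child has exited
elapsed = time.time() - start

return_codes = [p.returncode for p in procs]
print('return codes:', return_codes)
print('elapsed: %.2f s' % elapsed)
```

Because the children overlap, the elapsed time should be close to one second rather than three. Moving communicate() into the same loop iteration as Popen would serialize them.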

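2. Defining a single-threaded crawler

Usage: python ruisi.py <startNum> <endNum>

This code crawls the information for ids in [startNum, endNum) and writes it to the corresponding text files. It is a single-threaded program; to get parallelism, run several instances of it from the outside.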

    # ruisi.py
    # coding=utf-8
    import urllib2, re, sys, threading, time, thread

    # myurl: the link to fetch
    # seWord: compiled regular expression, written as unicode
    # returns the text matched by the regular expression, or None
    def getInfo(myurl, seWord):
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows; U; Windows NT 6.1; en-US; rv:1.9.1.6) Gecko/20091201 Firefox/3.5.6'
        }
        req = urllib2.Request(
            url=myurl,
            headers=headers
        )
        time.sleep(0.3)
        response = urllib2.urlopen(req)
        html = response.read()
        html = unicode(html, 'utf-8')
        timeMatch = seWord.search(html)
        if timeMatch:
            s = timeMatch.groups()
            return s[0]
        else:
            return None

    # try getInfo() twice
    # after the second failure, mark this id as httperror
    def safeGet(myid, myurl, seWord):
        try:
            return getInfo(myurl, seWord)
        except Exception:
            try:
                return getInfo(myurl, seWord)
            except Exception:
                httperrorfile = open(file5, 'a')
                info = '%d %s\n' % (myid, 'httperror')
                httperrorfile.write(info)
                httperrorfile.close()
                return 'httperror'

    # processes an id range idArr, e.g. [1, 1001)
    def searchWeb(idArr):
        for id in idArr:
            sexUrl = url1 % (id)
            timeUrl = url2 % (id)
            sex = safeGet(id, sexUrl, sexRe)
            if not sex:
                sex = safeGet(id, timeUrl, sexRe)
            time = safeGet(id, timeUrl, timeRe)

            # compare strings with ==, not with "is"
            if (sex == 'httperror') or (time == 'httperror'):
                pass
            else:
                if sex:
                    info = '%d %s' % (id, sex)
                    if time:
                        info = '%s %s\n' % (info, time)
                        wfile = open(file1, 'a')
                        wfile.write(info)
                        wfile.close()
                    else:
                        info = '%s %s\n' % (info, 'notime')
                        errtimefile = open(file2, 'a')
                        errtimefile.write(info)
                        errtimefile.close()
                else:
                    notexist = safeGet(id, sexUrl, notexistRe)
                    if notexist == 'httperror':
                        pass
                    else:
                        if notexist:
                            notexistfile = open(file3, 'a')
                            info = '%d %s\n' % (id, 'notexist')
                            notexistfile.write(info)
                            notexistfile.close()
                        else:
                            unkownsexfile = open(file4, 'a')
                            info = '%d %s\n' % (id, 'unkownsex')
                            unkownsexfile.write(info)
                            unkownsexfile.close()

    def main():
        reload(sys)
        sys.setdefaultencoding('utf-8')
        if len(sys.argv) != 3:
            print 'usage: python ruisi.py <startNum> <endNum>'
            sys.exit(-1)
        global sexRe, timeRe, notexistRe, url1, url2, file1, file2, file3, file4, startNum, endNum, file5
        startNum = int(sys.argv[1])
        endNum = int(sys.argv[2])
        sexRe = re.compile(u'em>\u6027\u522b</em>(.*?)</li')
        timeRe = re.compile(u'em>\u4e0a\u6b21\u6d3b\u52a8\u65f6\u95f4</em>(.*?)</li')
        notexistRe = re.compile(u'(p>)\u62b1\u6b49\uff0c\u60a8\u6307\u5b9a\u7684\u7528\u6237\u7a7a\u95f4\u4e0d\u5b58\u5728<')
        url1 = 'http://rs.xidian.edu.cn/home.php?mod=space&uid=%s'
        url2 = 'http://rs.xidian.edu.cn/home.php?mod=space&uid=%s&do=profile'
        file1 = '..//newRuisi//correct%s-%s.txt' % (startNum, endNum)
        file2 = '..//newRuisi//errTime%s-%s.txt' % (startNum, endNum)
        file3 = '..//newRuisi//notexist%s-%s.txt' % (startNum, endNum)
        file4 = '..//newRuisi//unkownsex%s-%s.txt' % (startNum, endNum)
        file5 = '..//newRuisi//httperror%s-%s.txt' % (startNum, endNum)

        searchWeb(xrange(startNum, endNum))
        # numThread = 10
        # searchWeb(xrange(endNum))
        # total = 0
        # for i in xrange(numThread):
        #     data = xrange(1+i,endNum,numThread)
        #     total =+ len(data)
        #     t=threading.Thread(target=searchWeb,args=(data,))
        #     t.start()
        # print total

    main()

Multithreaded crawler

Code

    # coding=utf-8
    from subprocess import Popen
    import subprocess
    import threading, time

    startn = 1
    endn = 300001
    step = 1000
    total = (endn - startn + 1) / step
    ISOTIMEFORMAT = '%Y-%m-%d %X'

    # hardcode 3 threads
    # I did not look into whether 3, 4 or more threads would be better
    # prints the formatted date and time
    # prints the elapsed time (in seconds)
    for i in xrange(0, total, 3):
        startNumber = startn + step * i
        startTime = time.clock()
        s0 = startNumber
        s1 = startNumber + step
        s2 = startNumber + step * 2
        s3 = startNumber + step * 3
        p1 = Popen(['python', 'ruisi.py', str(s0), str(s1)], bufsize=10000, stdout=subprocess.PIPE)
        p2 = Popen(['python', 'ruisi.py', str(s1), str(s2)], bufsize=10000, stdout=subprocess.PIPE)
        p3 = Popen(['python', 'ruisi.py', str(s2), str(s3)], bufsize=10000, stdout=subprocess.PIPE)
        startftime = '[ ' + time.strftime(ISOTIMEFORMAT, time.localtime()) + ' ] '
        print startftime + '%s - %s download start... ' % (s0, s1)
        print startftime + '%s - %s download start... ' % (s1, s2)
        print startftime + '%s - %s download start... ' % (s2, s3)
        p1.communicate()
        p2.communicate()
        p3.communicate()
        endftime = '[ ' + time.strftime(ISOTIMEFORMAT, time.localtime()) + ' ] '
        print endftime + '%s - %s download end !!! ' % (s0, s1)
        print endftime + '%s - %s download end !!! ' % (s1, s2)
        print endftime + '%s - %s download end !!! ' % (s2, s3)
        endTime = time.clock()
        print "cost time " + str(endTime - startTime) + " s"
        time.sleep(5)
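To see which id ranges the driver hands to each round of three workers, the range arithmetic can be pulled out into a generator. This is an illustrative Python 3 sketch; the function name batches and its workers parameter are assumptions, while the constants are the ones from the script.

```python
def batches(startn, endn, step, workers=3):
    """Yield lists of half-open (start, end) id ranges, `workers` ranges per round."""
    total = (endn - startn + 1) // step  # number of ranges, computed as in the driver script
    for i in range(0, total, workers):
        first = startn + step * i
        yield [(first + step * k, first + step * (k + 1)) for k in range(workers)]


all_batches = list(batches(1, 300001, 1000))
print(all_batches[0])       # [(1, 1001), (1001, 2001), (2001, 3001)]
print(len(all_batches))     # 100
print(all_batches[-1][-1])  # (299001, 300001)
```

With these constants the 300 ranges divide evenly into 100 rounds. If the number of ranges were not a multiple of the worker count, the last round would run past endn, just as the loop in the driver would.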

Here is the log with timestamps:

    "D:/Program Files/Python27/python.exe" E:/pythonProject/webCrawler/sum.py
    [ 2015-11-23 11:31:15 ] 1 - 1001 download start...
    [ 2015-11-23 11:31:15 ] 1001 - 2001 download start...
    [ 2015-11-23 11:31:15 ] 2001 - 3001 download start...
    [ 2015-11-23 11:53:44 ] 1 - 1001 download end !!!
    [ 2015-11-23 11:53:44 ] 1001 - 2001 download end !!!
    [ 2015-11-23 11:53:44 ] 2001 - 3001 download end !!!
    cost time 1348.99480677 s
    [ 2015-11-23 11:53:50 ] 3001 - 4001 download start...
    [ 2015-11-23 11:53:50 ] 4001 - 5001 download start...
    [ 2015-11-23 11:53:50 ] 5001 - 6001 download start...
    [ 2015-11-23 12:16:56 ] 3001 - 4001 download end !!!
    [ 2015-11-23 12:16:56 ] 4001 - 5001 download end !!!
    [ 2015-11-23 12:16:56 ] 5001 - 6001 download end !!!
    cost time 1386.06407734 s
    [ 2015-11-23 12:17:01 ] 6001 - 7001 download start...
    [ 2015-11-23 12:17:01 ] 7001 - 8001 download start...
    [ 2015-11-23 12:17:01 ] 8001 - 9001 download start...

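The above is the log of the multithreaded run. It shows that 1000 users take about 500 s on average, i.e. about 0.5 s per id. For all 300,000 ids that gives 500*300/3600 = 41.67 hours, so the full crawl takes roughly two days.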
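The estimate can be reproduced in a few lines. This Python 3 sketch first recovers the duration of the first round from its two timestamps in the log, then repeats the calculation with the rounded figure of 500 s per 1000 ids. The variable names are chosen for illustration only.

```python
from datetime import datetime

fmt = '%Y-%m-%d %H:%M:%S'
started = datetime.strptime('2015-11-23 11:31:15', fmt)
ended = datetime.strptime('2015-11-23 11:53:44', fmt)
batch_seconds = (ended - started).total_seconds()  # one round = 3 x 1000 ids
print('first round: %.0f s, %.0f s per 1000 ids' % (batch_seconds, batch_seconds / 3))

seconds_per_1000 = 500  # rounded average used in the text
total_ids = 300000      # ids 1..300000
total_seconds = seconds_per_1000 * total_ids / 1000.0
hours = total_seconds / 3600
days = hours / 24
print('%.2f hours, about %.1f days' % (hours, days))  # 41.67 hours, about 1.7 days
```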

Next we measure the single-threaded crawler once more. The log is as follows:

    "D:/Program Files/Python27/python.exe" E:/pythonProject/webCrawler/sum.py
    1 - 1001 download start...
    1 - 1001 download end !!!
    cost time 1583.65911889 s
    1001 - 2001 download start...
    1001 - 2001 download end !!!
    cost time 1342.46874278 s
    2001 - 3001 download start...
    2001 - 3001 download end !!!
    cost time 1327.10885725 s
    3001 - 4001 download start...

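We find that a single thread also needs about 1500 s to crawl 1000 users, whereas the multithreaded program handles 3*1000 users in about 1500 s.

So the multithreaded version really does save a lot of time compared with the single-threaded one.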
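As a rough check of that comparison, the "cost time" values from the two timestamp-free and timestamped runs shown earlier can be averaged and compared. This is a Python 3 sketch over only the few samples quoted in this post, so the resulting ratio is indicative rather than a careful benchmark.

```python
# "cost time" values copied from the logs in this post
single = [1583.65911889, 1342.46874278, 1327.10885725]  # seconds per 1000 ids
multi = [1348.99480677, 1386.06407734]                  # seconds per 3 x 1000 ids

single_per_1000 = sum(single) / len(single)
multi_per_1000 = sum(multi) / len(multi) / 3
speedup = single_per_1000 / multi_per_1000
print('single: %.0f s, parallel: %.0f s per 1000 ids, speedup %.1fx'
      % (single_per_1000, multi_per_1000, speedup))
```

With three workers the ratio comes out at roughly three, which is what one would expect when most of the time is spent waiting on the network.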

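Note:

getInfo(myurl, seWord) contains a time.sleep(0.3) call. It is there to avoid hitting the BBS too frequently and being refused access. These 0.3 s affect the timings reported above for both the multithreaded and the single-threaded runs.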
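To get a feel for how much the sleep contributes, here is a back-of-the-envelope lower bound in Python 3. It assumes each id triggers at least two getInfo calls (one gender lookup and one last-active-time lookup, as in searchWeb). Ids with a missing gender trigger more, so the real share is somewhat higher.

```python
sleep_per_request = 0.3  # seconds, from time.sleep(0.3) in getInfo
min_requests_per_id = 2  # assumption: one gender lookup + one time lookup
ids_per_range = 1000

min_sleep = sleep_per_request * min_requests_per_id * ids_per_range
measured = 1327.10885725  # fastest single-process range in the log above
share = 100 * min_sleep / measured
print('sleep alone: at least %.0f s of %.0f s (%.0f%%)' % (min_sleep, measured, share))
```

Under these assumptions a large part of each range's running time is deliberate waiting rather than network or parsing work.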

Finally, here is the original log without timestamps. (With timestamps you can tell when each crawl started, which helps in dealing with a hung worker.)

    "D:/Program Files/Python27/python.exe" E:/pythonProject/webCrawler/sum.py
    1 - 1001 download start...
    1001 - 2001 download start...
    2001 - 3001 download start...
    1 - 1001 download end !!!
    1001 - 2001 download end !!!
    2001 - 3001 download end !!!
    cost time 1532.74102812 s
    3001 - 4001 download start...
    4001 - 5001 download start...
    5001 - 6001 download start...
    3001 - 4001 download end !!!
    4001 - 5001 download end !!!
    5001 - 6001 download end !!!
    cost time 2652.01624951 s
    6001 - 7001 download start...
    7001 - 8001 download start...
    8001 - 9001 download start...
    6001 - 7001 download end !!!
    7001 - 8001 download end !!!
    8001 - 9001 download end !!!
    cost time 1880.61513696 s
    9001 - 10001 download start...
    10001 - 11001 download start...
    11001 - 12001 download start...
    9001 - 10001 download end !!!
    10001 - 11001 download end !!!
    11001 - 12001 download end !!!
    cost time 1634.40575553 s
    12001 - 13001 download start...
    13001 - 14001 download start...
    14001 - 15001 download start...
    12001 - 13001 download end !!!
    13001 - 14001 download end !!!
    14001 - 15001 download end !!!
    cost time 1403.62795496 s
    15001 - 16001 download start...
    16001 - 17001 download start...
    17001 - 18001 download start...
    15001 - 16001 download end !!!
    16001 - 17001 download end !!!
    17001 - 18001 download end !!!
    cost time 1271.42177906 s
    18001 - 19001 download start...
    19001 - 20001 download start...
    20001 - 21001 download start...
    18001 - 19001 download end !!!
    19001 - 20001 download end !!!
    20001 - 21001 download end !!!
    cost time 1476.04122024 s
    21001 - 22001 download start...
    22001 - 23001 download start...
    23001 - 24001 download start...
    21001 - 22001 download end !!!
    22001 - 23001 download end !!!
    23001 - 24001 download end !!!
    cost time 1431.37074164 s
    24001 - 25001 download start...
    25001 - 26001 download start...
    26001 - 27001 download start...
    24001 - 25001 download end !!!
    25001 - 26001 download end !!!
    26001 - 27001 download end !!!
    cost time 1411.45186874 s
    27001 - 28001 download start...
    28001 - 29001 download start...
    29001 - 30001 download start...
    27001 - 28001 download end !!!
    28001 - 29001 download end !!!
    29001 - 30001 download end !!!
    cost time 1396.88837788 s
    30001 - 31001 download start...
    31001 - 32001 download start...
    32001 - 33001 download start...
    30001 - 31001 download end !!!
    31001 - 32001 download end !!!
    32001 - 33001 download end !!!
    cost time 1389.01316718 s
    33001 - 34001 download start...
    34001 - 35001 download start...
    35001 - 36001 download start...
    33001 - 34001 download end !!!
    34001 - 35001 download end !!!
    35001 - 36001 download end !!!
    cost time 1318.16040825 s
    36001 - 37001 download start...
    37001 - 38001 download start...
    38001 - 39001 download start...
    36001 - 37001 download end !!!
    37001 - 38001 download end !!!
    38001 - 39001 download end !!!
    cost time 1362.59222822 s
    39001 - 40001 download start...
    40001 - 41001 download start...
    41001 - 42001 download start...
    39001 - 40001 download end !!!
    40001 - 41001 download end !!!
    41001 - 42001 download end !!!
    cost time 1253.62498539 s
    42001 - 43001 download start...
    43001 - 44001 download start...
    44001 - 45001 download start...
    42001 - 43001 download end !!!
    43001 - 44001 download end !!!
    44001 - 45001 download end !!!
    cost time 1313.50461988 s
    45001 - 46001 download start...
    46001 - 47001 download start...
    47001 - 48001 download start...
    45001 - 46001 download end !!!
    46001 - 47001 download end !!!
    47001 - 48001 download end !!!
    cost time 1322.32317331 s
    48001 - 49001 download start...
    49001 - 50001 download start...
    50001 - 51001 download start...
    48001 - 49001 download end !!!
    49001 - 50001 download end !!!
    50001 - 51001 download end !!!
    cost time 1381.58027296 s
    51001 - 52001 download start...
    52001 - 53001 download start...
    53001 - 54001 download start...
    51001 - 52001 download end !!!
    52001 - 53001 download end !!!
    53001 - 54001 download end !!!
    cost time 1357.78699459 s
    54001 - 55001 download start...
    55001 - 56001 download start...
    56001 - 57001 download start...
    54001 - 55001 download end !!!
    55001 - 56001 download end !!!
    56001 - 57001 download end !!!
    cost time 1359.76377246 s
    57001 - 58001 download start...
    58001 - 59001 download start...
    59001 - 60001 download start...
    57001 - 58001 download end !!!
    58001 - 59001 download end !!!
    59001 - 60001 download end !!!
    cost time 1335.47829775 s
    60001 - 61001 download start...
    61001 - 62001 download start...
    62001 - 63001 download start...
    60001 - 61001 download end !!!
    61001 - 62001 download end !!!
    62001 - 63001 download end !!!
    cost time 1354.82727645 s
    63001 - 64001 download start...
    64001 - 65001 download start...
    65001 - 66001 download start...
    63001 - 64001 download end !!!
    64001 - 65001 download end !!!
    65001 - 66001 download end !!!
    cost time 1260.54731607 s
    66001 - 67001 download start...
    67001 - 68001 download start...
    68001 - 69001 download start...
    66001 - 67001 download end !!!
    67001 - 68001 download end !!!
    68001 - 69001 download end !!!
    cost time 1363.58255686 s
    69001 - 70001 download start...
    70001 - 71001 download start...
    71001 - 72001 download start...
    69001 - 70001 download end !!!
    70001 - 71001 download end !!!
    71001 - 72001 download end !!!
    cost time 1354.17163074 s
    72001 - 73001 download start...
    73001 - 74001 download start...
    74001 - 75001 download start...
    72001 - 73001 download end !!!
    73001 - 74001 download end !!!
    74001 - 75001 download end !!!
    cost time 1335.00425259 s
    75001 - 76001 download start...
    76001 - 77001 download start...
    77001 - 78001 download start...
    75001 - 76001 download end !!!
    76001 - 77001 download end !!!
    77001 - 78001 download end !!!
    cost time 1360.44054978 s
    78001 - 79001 download start...
    79001 - 80001 download start...
    80001 - 81001 download start...
    78001 - 79001 download end !!!
    79001 - 80001 download end !!!
    80001 - 81001 download end !!!
    cost time 1369.72662457 s
    81001 - 82001 download start...
    82001 - 83001 download start...
    83001 - 84001 download start...
    81001 - 82001 download end !!!
    82001 - 83001 download end !!!
    83001 - 84001 download end !!!
    cost time 1369.95550676 s
    84001 - 85001 download start...
    85001 - 86001 download start...
    86001 - 87001 download start...
    84001 - 85001 download end !!!
    85001 - 86001 download end !!!
    86001 - 87001 download end !!!
    cost time 1482.53886433 s
    87001 - 88001 download start...
    88001 - 89001 download start...
    89001 - 90001 download start...

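That concludes Part 2 of using a Python crawler to compute the male-to-female ratio of the school BBS, which focused on the multithreaded crawler. I hope it helps with your learning.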