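This article walks through an example of scraping NetEase News with Python and regular expressions. It is shared here for reference; the details are as follows: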

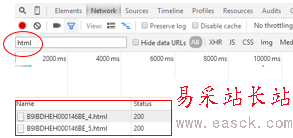

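I wrote a few crawlers for NetEase News and found that the page source does not match the comments displayed on the page at all. So I used a packet-capture tool to find the hidden address that serves the comments. (Every browser ships with its own network inspection tools, and any of them can be used to analyze a site.)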

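Look closely at the captured requests and one of them stands out from the rest: that is the one we want (the `comment.news.163.com` address used in the code below).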
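Once the address is known, the request can be replayed from a script. The following is a minimal Python 3 sketch of that step, not the author's code (the listing in this article targets Python 2). The helper names `build_request` and `fetch`, the shortened `User-Agent` value, and the 10-second timeout are assumptions made for illustration, and the address itself may no longer be served.

```python
import urllib.error
import urllib.request

# Address taken from the article's listing; it may no longer respond.
COMMENT_URL = "http://comment.news.163.com/data/news3_bbs/df/B9IBDHEH000146BE_1.html"


def build_request(url, user_agent="Mozilla/5.0"):
    """Build (but do not send) a GET request with a browser-like User-Agent."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def fetch(url, timeout=10):
    """Send the request; return the raw body as bytes, or None on failure."""
    try:
        with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
            return resp.read()
    except urllib.error.URLError as exc:
        print("connection failed:", exc.reason)
        return None
```

Keeping request construction separate from sending makes it possible to inspect the headers without touching the network.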

Opening that link then shows the corresponding comment data. (The screenshot shows the first page of comments.)
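The listing in this article removes a `var replyData=` or `var newPostList=` prefix before calling `json.loads`, which suggests the response is a JavaScript assignment wrapping a JSON object. A minimal Python 3 sketch of that unwrapping step follows. The helper name `unwrap_js_assignment` and the sample payload are hypothetical; the sample only imitates the keys the listing reads (`hotPosts`, `'1'`, `f`, `b`, `a`, `v`), and the real response may differ.

```python
import json


def unwrap_js_assignment(body, var_name):
    """Strip a leading 'var NAME=' and any trailing ';', then parse as JSON."""
    prefix = "var %s=" % var_name
    text = body.strip()
    if not text.startswith(prefix):
        raise ValueError("unexpected response format")
    return json.loads(text[len(prefix):].rstrip(";").strip())


# Hypothetical sample shaped like the fields the article's code reads.
sample = 'var replyData={"hotPosts":[{"1":{"f":"user1","b":"nice","a":"0","v":"3"}}]};'
data = unwrap_js_assignment(sample, "replyData")
print(data["hotPosts"][0]["1"]["b"])
```

Checking the prefix explicitly, rather than slicing a fixed number of characters off the end, makes a changed response format fail loudly instead of producing a confusing JSON error.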

Here is the code (adapted, with some edits, from an expert's version). It targets Python 2.

    #coding=utf-8
    import urllib2
    import re
    import json
    import time


    class WY():
        def __init__(self):
            self.headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 5.1) AppleWebKit/534.24 (KHTML, like '}
            # Page 1 (hot comments) is served from a different path
            self.url = 'http://comment.news.163.com/data/news3_bbs/df/B9IBDHEH000146BE_1.html'

        def getpage(self, page):
            # Build the URL for pages 2 and later
            full_url = 'http://comment.news.163.com/cache/newlist/news3_bbs/B9IBDHEH000146BE_' + str(page) + '.html'
            return full_url

        def gethtml(self, page):
            # Fetch a URL and return the body, or None if the request fails
            try:
                req = urllib2.Request(page, None, self.headers)
                response = urllib2.urlopen(req)
                html = response.read()
                return html
            except urllib2.URLError, e:
                if hasattr(e, 'reason'):
                    print u"Connection failed:", e.reason
                return None

        # Clean up the response string
        def Process(self, data, page):
            # Drop the JavaScript variable assignment in front of the JSON
            if page == 1:
                data = data.replace('var replyData=', '')
            else:
                data = data.replace('var newPostList=', '')
            # Strip the anchor markup and line breaks embedded in the comments
            reg1 = re.compile(r" \[<a href=''>")
            data = reg1.sub(' ', data)
            reg2 = re.compile(r'<\\/a>\]')  # matches the JSON-escaped "<\/a>]"
            data = reg2.sub('', data)
            reg3 = re.compile('<br>')
            data = reg3.sub('', data)
            return data

        # Parse the JSON and append one line per comment to WY.txt
        def dealJSON(self):
            with open("WY.txt", "a") as f:
                f.write('ID|comment|downvotes|upvotes\n')
                for i in range(1, 12):  # pages 1 to 11
                    if i == 1:
                        url, trim, key = self.url, -1, 'hotPosts'
                    else:
                        url, trim, key = self.getpage(i), -2, 'newPosts'
                    html = self.gethtml(url)
                    if html is None:
                        continue
                    # Trim the trailing characters after the JSON object
                    value = json.loads(self.Process(html, i)[:trim])
                    for item in value[key]:
                        try:
                            post = item['1']
                            row = '|'.join(post[k].encode('utf-8') for k in ('f', 'b', 'a', 'v'))
                        except (KeyError, AttributeError):
                            continue  # skip records that lack the expected fields
                        f.write(row + '\n')
                    print '--Collecting %d/11--' % i
                    time.sleep(5)


    if __name__ == '__main__':
        WY().dealJSON()
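The regular-expression step can be tried in isolation. The sketch below is a Python 3 illustration under two assumptions: that the slashes in the listing's patterns stand in for backslashes (so the second pattern targets the JSON-escaped `<\/a>]`), and that each record carries the `f`, `b`, `a`, `v` keys the listing reads. The names `clean` and `to_row` and the sample strings are hypothetical.

```python
import re

# The three substitutions from the listing, written as raw strings.
OPEN_ANCHOR = re.compile(r" \[<a href=''>")
CLOSE_ANCHOR = re.compile(r"<\\/a>\]")  # literal backslash, then "/a>]"
LINE_BREAK = re.compile(r"<br>")


def clean(text):
    """Remove the anchor wrapper and <br> tags embedded in a comment payload."""
    text = OPEN_ANCHOR.sub(" ", text)
    text = CLOSE_ANCHOR.sub("", text)
    return LINE_BREAK.sub("", text)


def to_row(post):
    """Join the four fields of one record with '|' in the listing's order."""
    return "|".join(post[key] for key in ("f", "b", "a", "v"))


# Hypothetical fragment imitating the markup the patterns target.
raw = r"NetEase user [<a href=''>Beijing<\/a>]: good<br>point"
print(clean(raw))
print(to_row({"f": "user1", "b": "nice", "a": "0", "v": "3"}))
```

Raw strings keep the backslashes readable: `r"<\\/a>\]"` is the regex for a literal backslash followed by `/a>]`, which is how `</a>]` appears inside an escaped JSON string.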
