This article walks through the basic usage of Scrapy, the Python crawling framework, using examples. The details are as follows:

XPath

<html>
<head>
  <title>Title</title>
</head>
<body>
  <h2>Second-level heading</h2>
  <p>Crawler 1</p>
  <p>Crawler 2</p>
</body>
</html>

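To get the content of the h2 element in the HTML above, we can use the following code:

info = response.xpath("/html/body/h2/text()")

Here /html/body/h2 spells out the element hierarchy that leads to the content, and text() selects the text inside the h2 tag. //p selects every p tag in the document. To select tags that carry a specific attribute value, use the form //tag[@attribute="value"]. For example, given: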

<div class="hide"></div>

The div tag whose class is hide can be selected with:

//div[@class="hide"]
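The three lookups described above can be tried outside Scrapy with a small sketch. This one deliberately swaps in the standard library's xml.etree.ElementTree, which supports only a limited XPath subset (no absolute paths and no text()), so each lookup is written in ElementTree's form with the Scrapy-style expression from the text shown in a comment. The sample markup is an assumption: it merges the article's HTML page and the hidden div into one well-formed document.

```python
import xml.etree.ElementTree as ET

# Assumed sample: the article's page with the hidden div added to the body,
# so that all three lookups can run against a single document.
HTML = """
<html>
  <head><title>Title</title></head>
  <body>
    <h2>Second-level heading</h2>
    <p>Crawler 1</p>
    <p>Crawler 2</p>
    <div class="hide"></div>
  </body>
</html>
""".strip()

root = ET.fromstring(HTML)  # root is the <html> element

# Scrapy form: /html/body/h2/text()
h2_text = root.find("body/h2").text

# Scrapy form: //p
paragraphs = [p.text for p in root.findall(".//p")]

# Scrapy form: //div[@class="hide"]
hidden_divs = root.findall(".//div[@class='hide']")

print(h2_text)
print(paragraphs)
print(len(hidden_divs))
```

Inside a real spider the same queries would go through response.xpath(...) as shown in the text; ElementTree is used here only so the sketch runs without installing anything.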

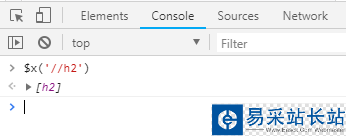

As another example, in the Console panel of Google Chrome we can run $x('//h2') to get the h2 elements on the current page.

The xmlfeed template
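Conceptually, an XML feed spider walks over every node with a given tag name and hands each one to a per-node callback. The following is a rough standard-library sketch of that idea, not Scrapy's implementation: the feed contents, the iter_feed helper, and the standalone parse_node function are all hypothetical names and data made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical feed; the real XML file used by a spider is not reproduced here.
FEED = """
<people>
  <person><name>Ann</name><email>ann@example.com</email></person>
  <person><name>Bob</name><email>bob@example.com</email></person>
</people>
""".strip()

ITERTAG = "person"  # tag name of the nodes to iterate over


def parse_node(node):
    """Per-node callback: collect the text of every <email> child."""
    return {"email": [e.text for e in node.findall("email")]}


def iter_feed(xml_text, itertag):
    """Yield one parsed item for each node whose tag equals itertag."""
    root = ET.fromstring(xml_text)
    for node in root.iter(itertag):
        yield parse_node(node)


items = list(iter_feed(FEED, ITERTAG))
print(items)
```

The split between "which nodes to visit" (the tag name) and "what to do with each node" (the callback) is the part that carries over to a feed spider.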

Create a spider from the xmlfeed template:

scrapy genspider -t xmlfeed abc iqianyue.com

Core code:

    from scrapy.spiders import XMLFeedSpider


    class AbcSpider(XMLFeedSpider):
        name = 'abc'
        start_urls = ['http://yum.iqianyue.com/weisuenbook/pyspd/part12/test.xml']
        # Iterator to use. The default, iternodes, is a high-performance
        # iterator based on regular expressions; "html" and "xml" are also available.
        iterator = 'iternodes'
        # Name of the node (tag) to iterate over.
        itertag = 'person'

        # parse_node is called automatically for each node that matches itertag.
        def parse_node(self, response, selector):
            i = {}
            xpath = "/person/email/text()"
            info = selector.xpath(xpath).extract()
            print(info)
            return i

The csvfeed template
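Conceptually, a CSV feed spider splits each line of the file on a delimiter, pairs the values with a list of header names, and passes every row to a callback as a dict. Below is a minimal standard-library sketch of that mapping, not Scrapy's own code. The sample data and the iter_rows helper are hypothetical, and the sketch assumes the file has no header row of its own.

```python
import csv
import io

# Hypothetical data; assumed to contain no header row.
DATA = "Ann,F,Beijing,ann@example.com\nBob,M,Shanghai,bob@example.com\n"

HEADERS = ["name", "sex", "addr", "email"]  # field names, in column order
DELIMITER = ","                             # separator between fields


def parse_row(row):
    """Per-row callback: keep only the fields of interest."""
    return {"name": row["name"], "email": row["email"]}


def iter_rows(text, headers, delimiter):
    """Turn each CSV line into a dict keyed by headers and parse it."""
    reader = csv.DictReader(io.StringIO(text), fieldnames=headers,
                            delimiter=delimiter)
    for row in reader:
        yield parse_row(row)


rows = list(iter_rows(DATA, HEADERS, DELIMITER))
print(rows)
```

Because each row arrives as a dict, the callback can read fields by name (row["email"]) rather than by column position.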

Create a spider from the csvfeed template:

scrapy genspider -t csvfeed csvspider iqianyue.com

Core code:

    from scrapy.spiders import CSVFeedSpider


    class CsvspiderSpider(CSVFeedSpider):
        name = 'csvspider'
        allowed_domains = ['iqianyue.com']
        start_urls = ['http://yum.iqianyue.com/weisuenbook/pyspd/part12/mydata.csv']
        # headers lists the column names used to extract fields from the CSV file.
        headers = ['name', 'sex', 'addr', 'email']
        # delimiter is the separator between fields.
        delimiter = ','

        def parse_row(self, response, row):
            i = {}
            name = row["name"]
            sex = row["sex"]
            addr = row["addr"]
            email = row["email"]
            print(name, sex, addr, email)
            #i['url'] = row['url']
            #i['name'] = row['name']
            #i['description'] = row['description']
            return i

The crawl template
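A crawl spider decides which links to follow by testing each URL against the patterns in its rules. The sketch below illustrates only that filtering idea with the standard library, under the assumption that an allow pattern is applied as a regular-expression search against the absolute URL. The real link extractor does considerably more (HTML parsing, de-duplication, domain filtering); the hrefs and the extract_links helper here are hypothetical.

```python
import re
from urllib.parse import urljoin

BASE_URL = "http://sohu.com/"
ALLOW = re.compile(r"Items/")  # same pattern as allow=r'Items/' in a rule

# Hypothetical hrefs, as they might appear in a page's <a> tags.
HREFS = [
    "/Items/1.html",
    "about.html",
    "http://sohu.com/Items/list",
    "/news/today",
]


def extract_links(base_url, hrefs, allow):
    """Resolve each href against base_url and keep URLs matching allow."""
    links = []
    for href in hrefs:
        url = urljoin(base_url, href)
        if allow.search(url):
            links.append(url)
    return links


followed = extract_links(BASE_URL, HREFS, ALLOW)
print(followed)
```

Only the URLs containing Items/ survive the filter, which corresponds to the pages that would be handed to the rule's callback.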

Create a spider from the crawl template:

scrapy genspider -t crawl crawlspider sohu.com

Core code:

    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule


    class CrawlspiderSpider(CrawlSpider):
        name = 'crawlspider'
        allowed_domains = ['sohu.com']
        start_urls = ['http://sohu.com/']

        # Follow links whose URL matches 'Items/' and pass each page to parse_item.
        rules = (
            Rule(LinkExtractor(allow=r'Items/'), callback='parse_item', follow=True),
        )

        def parse_item(self, response):
            i = {}
            #i['domain_id'] = response.xpath('//input[@id="sid"]/@value').extract()
            #i['name'] = response.xpath('//div[@id="name"]').extract()
            #i['description'] = response.xpath('//div[@id="description"]').extract()
            return i
