I. Basic setup: scraping the content of a single web page

(1) Create a Scrapy project

    scrapy startproject getblog

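(2) Edit items.py

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # http://doc.scrapy.org/en/latest/topics/items.html

    from scrapy.item import Item, Field


    class BlogItem(Item):
        # The spider below fills both fields, so both must be declared;
        # assigning to an undeclared field raises KeyError.
        title = Field()
        desc = Field()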

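(3) In the spiders folder, create blog_spider.py

You need some familiarity with XPath selection first. It feels much like jQuery selectors, though it is not as comfortable to use (w3school tutorial: http://www.w3school.com.cn/xpath/).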
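To get a feel for the XPath expressions used in the spider, here is a minimal sketch that runs without Scrapy. It uses the standard library's xml.etree.ElementTree, which supports only a subset of XPath and needs well-formed markup, so it is a stand-in for Scrapy's Selector rather than the same thing. The sample markup and the extract_posts helper are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed sample that mimics the structure the spider expects.
SAMPLE = """
<html><body>
  <div class="post_item">
    <div class="digg">12</div>
    <div class="post_item_body">
      <h3><a href="/p/1.html">First post</a></h3>
      <p class="post_item_summary">Summary one</p>
    </div>
  </div>
  <div class="post_item">
    <div class="digg">3</div>
    <div class="post_item_body">
      <h3><a href="/p/2.html">Second post</a></h3>
      <p class="post_item_summary">Summary two</p>
    </div>
  </div>
</body></html>
""".strip()


def extract_posts(root):
    """Return title and summary for every post found under root."""
    posts = []
    # Every div with class="post_item", then its second child div.
    for body in root.findall(".//div[@class='post_item']/div[2]"):
        posts.append({
            # Text of the a tag under the h3 tag.
            "title": body.findtext("h3/a"),
            # Text of the p tag whose class is post_item_summary.
            "desc": body.findtext("p[@class='post_item_summary']"),
        })
    return posts


root = ET.fromstring(SAMPLE)
posts = extract_posts(root)
for post in posts:
    print(post["title"], "-", post["desc"])
```

The predicate [@class='post_item'] filters by attribute value and div[2] picks the second div child, which is the same reading as in the spider's '//div[@class="post_item"]/div[2]' expression.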

    # coding=utf-8
    from scrapy.spider import Spider
    from scrapy.selector import Selector

    from getblog.items import BlogItem


    class BlogSpider(Spider):
        # Spider name, used on the command line
        name = 'blog'
        # Start URLs
        start_urls = ['http://www.cnblogs.com/']

        def parse(self, response):
            sel = Selector(response)
            # XPath selector: pick every div whose class attribute is
            # "post_item", then take its second child div
            sites = sel.xpath('//div[@class="post_item"]/div[2]')
            items = []
            for site in sites:
                item = BlogItem()
                # Text content ("text()") of the a tag under the h3 tag
                item['title'] = site.xpath('h3/a/text()').extract()
                # Likewise, the text content of the summary p tag
                item['desc'] = site.xpath('p[@class="post_item_summary"]/text()').extract()
                items.append(item)
            return items

(4) Run the spider

    scrapy crawl blog

(5) Output to a file

Configure the output in settings.py.

    # Output file location
    FEED_URI = 'blog.xml'
    # Output file format: json, xml, or csv
    FEED_FORMAT = 'xml'

The output file is written to the project root folder.

II. Basics -- scrapy.spider.Spider

(1) Using the interactive shell

    dizzy@dizzy-pc:~$ scrapy shell "http://www.baidu.com/"

    2014-08-21 04:09:11+0800 [scrapy] INFO: Scrapy 0.24.4 started (bot: scrapybot)
    2014-08-21 04:09:11+0800 [scrapy] INFO: Optional features available: ssl, http11, django
    2014-08-21 04:09:11+0800 [scrapy] INFO: Overridden settings: {'LOGSTATS_INTERVAL': 0}
    2014-08-21 04:09:11+0800 [scrapy] INFO: Enabled extensions: TelnetConsole, CloseSpider, WebService, CoreStats, SpiderState
    2014-08-21 04:09:11+0800 [scrapy] INFO: Enabled downloader middlewares: HttpAuthMiddleware, DownloadTimeoutMiddleware, UserAgentMiddleware, RetryMiddleware, DefaultHeadersMiddleware, MetaRefreshMiddleware, HttpCompressionMiddleware, RedirectMiddleware, CookiesMiddleware, ChunkedTransferMiddleware, DownloaderStats
    2014-08-21 04:09:11+0800 [scrapy] INFO: Enabled spider middlewares: HttpErrorMiddleware, OffsiteMiddleware, RefererMiddleware, UrlLengthMiddleware, DepthMiddleware
    2014-08-21 04:09:11+0800 [scrapy] INFO: Enabled item pipelines:
    2014-08-21 04:09:11+0800 [scrapy] DEBUG: Telnet console listening on 127.0.0.1:6024
    2014-08-21 04:09:11+0800 [scrapy] DEBUG: Web service listening on 127.0.0.1:6081
    2014-08-21 04:09:11+0800 [default] INFO: Spider opened
    2014-08-21 04:09:12+0800 [default] DEBUG: Crawled (200) <GET http://www.baidu.com/> (referer: None)
    [s] Available Scrapy objects:
    [s]   crawler    <scrapy.crawler.Crawler object at 0xa483cec>
    [s]   item       {}
    [s]   request    <GET http://www.baidu.com/>
    [s]   response   <200 http://www.baidu.com/>
    [s]   settings   <scrapy.settings.Settings object at 0xa0de78c>
    [s]   spider     <Spider 'default' at 0xa78086c>
    [s] Useful shortcuts:
    [s]   shelp()           Shell help (print this help)
    [s]   fetch(req_or_url) Fetch request (or URL) and update local objects
    [s]   view(response)    View response in a browser
    >>> # response.body holds the entire content that was returned
    >>> # response.xpath('//ul/li') lets you test any XPath expression

From the Scrapy documentation: "More important, if you type response.selector you will access a selector object you can use to query the response, and convenient shortcuts like response.xpath() and response.css() mapping to response.selector.xpath() and response.selector.css()."

In other words, the shell makes it easy to check interactively whether an XPath selection is correct. I used to pick elements with Firefox's F12 developer tools, but that did not guarantee the right content was selected every time.

You can also use:

    # The --nolog option suppresses log output
    scrapy shell 'http://scrapy.org' --nolog

(2) Example

    from scrapy import Spider

    from scrapy_test.items import DmozItem


    class DmozSpider(Spider):
        name = 'dmoz'
        allowed_domains = ['dmoz.org']
        start_urls = [
            'http://www.dmoz.org/Computers/Programming/Languages/Python/Books/',
            'http://www.dmoz.org/Computers/Programming/Languages/Python/Resources/',
        ]

        def parse(self, response):
            # One item per list entry on the page
            for sel in response.xpath('//ul/li'):
                item = DmozItem()
                item['title'] = sel.xpath('a/text()').extract()
                item['link'] = sel.xpath('a/@href').extract()
                item['desc'] = sel.xpath('text()').extract()
                yield item

(3) Saving to a file

The following command saves the scraped items to a file. The format can be json, xml, or csv.

    scrapy crawl dmoz -o a.json -t json
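Once a crawl has written a JSON feed, the items can be read back with the standard json module, because Scrapy's JSON export is a single JSON array of item objects. The sketch below is only an illustration: load_items is a made-up helper, and it reads a hand-written sample file standing in for a.json rather than real crawl output.

```python
import json
import os
import tempfile


def load_items(path):
    """Read a JSON feed: one JSON array holding one object per item."""
    with open(path, encoding="utf-8") as feed:
        return json.load(feed)


# Hand-written sample standing in for the a.json produced by a crawl.
# Field values are lists because extract() returns a list of strings.
sample = [
    {"title": ["Python Books"], "link": ["http://example.com/books"], "desc": []},
    {"title": ["Python Resources"], "link": ["http://example.com/resources"], "desc": []},
]
sample_path = os.path.join(tempfile.mkdtemp(), "a.json")
with open(sample_path, "w", encoding="utf-8") as feed:
    json.dump(sample, feed)

items = load_items(sample_path)
# Take the first extracted title of each item, skipping empty results.
titles = [item["title"][0] for item in items if item["title"]]
print(titles)
```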

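(4) Creating a spider from a template

    scrapy genspider baidu baidu.com

The command generates the following spider:

    # -*- coding: utf-8 -*-
    import scrapy


    class BaiduSpider(scrapy.Spider):
        name = "baidu"
        allowed_domains = ["baidu.com"]
        start_urls = (
            'http://www.baidu.com/',
        )

        def parse(self, response):
            pass

That will do for this section. I remember there being five points, but now I can only recall four. :-(

Always remember to hit the save button as you go. Losing your work really ruins your mood (⊙o⊙)!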

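III. Advanced -- scrapy.contrib.spiders.CrawlSpider

Example

    # coding=utf-8
    # Import paths are those of Scrapy 0.24; from Scrapy 1.0 on the modules
    # are scrapy.spiders and scrapy.linkextractors.
    from scrapy.contrib.spiders import CrawlSpider, Rule
    from scrapy.contrib.linkextractors import LinkExtractor
    import scrapy


    class TestItem(scrapy.Item):
        # A bare scrapy.Item() rejects undeclared keys, so declare the fields
        id = scrapy.Field()
        name = scrapy.Field()
        description = scrapy.Field()


    class TestSpider(CrawlSpider):
        name = 'test'
        allowed_domains = ['example.com']
        start_urls = ['http://www.example.com/']
        rules = (  # a tuple of rules
            # Follow links matching "category.php" but not "subsection.php"
            Rule(LinkExtractor(allow=(r'category\.php', ), deny=(r'subsection\.php', ))),
            # Hand links matching "item.php" to parse_item
            Rule(LinkExtractor(allow=(r'item\.php', )), callback='parse_item'),
        )

        def parse_item(self, response):
            self.log('item page : %s' % response.url)
            item = TestItem()
            item['id'] = response.xpath('//td[@id="item_id"]/text()').re(r'ID:(\d+)')
            item['name'] = response.xpath('//td[@id="item_name"]/text()').extract()
            item['description'] = response.xpath('//td[@id="item_description"]/text()').extract()
            return item

Other built-in spider classes include: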

- class scrapy.contrib.spiders.XMLFeedSpider

- class scrapy.contrib.spiders.CSVFeedSpider

- class scrapy.contrib.spiders.SitemapSpider

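IV. Selectors

    >>> from scrapy.selector import Selector
    >>> from scrapy.http import HtmlResponse

You can combine .css() and .xpath() flexibly to pick out the target data quickly.

Selectors deserve careful study: both xpath() and css(), plus more practice with regular expressions.

When selecting by class, prefer css(), and then use xpath() to pick out the element's attributes.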

V. Item Pipeline

Typical uses of item pipelines are:

• cleansing HTML data

• validating scraped data (checking that the items contain certain fields)

• checking for duplicates (and dropping them)

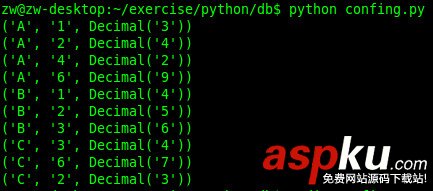

• storing the scraped item in a database
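The first use in the list, cleansing HTML data, could look roughly like the sketch below. It is a plain class with a process_item method, so it needs nothing beyond the Python 3 standard library. The class name, the clean_text helper, and the field names title and desc are assumptions chosen to match the BlogItem example, and the regex-based tag stripping is a crude approach that is only adequate for simple fragments.

```python
import re
from html import unescape

# Crude tag matcher; fine for simple fragments, not a full HTML parser.
TAG_RE = re.compile(r"<[^>]+>")


def clean_text(raw):
    """Strip tags, decode entities, and collapse runs of whitespace."""
    text = TAG_RE.sub(" ", raw)
    text = unescape(text)
    return " ".join(text.split())


class CleanTextPipeline(object):
    # Hypothetical field names, matching the BlogItem example.
    fields = ("title", "desc")

    def process_item(self, item, spider):
        for name in self.fields:
            value = item.get(name)
            if value is None:
                continue
            # extract() returns a list of strings, so join it first.
            if isinstance(value, (list, tuple)):
                value = " ".join(value)
            item[name] = clean_text(value)
        return item


# Usage with a plain dict standing in for a scraped item.
cleaned = CleanTextPipeline().process_item(
    {"title": ["  <b>Hello</b>\n", "world &amp; more "], "desc": "<p>x</p>"},
    spider=None,
)
print(cleaned)
```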

(1) Validating data

    from scrapy.exceptions import DropItem


    class PricePipeline(object):
        vat_factor = 1.5

        def process_item(self, item, spider):
            if item['price']:
                if item['price_excludes_vat']:
                    # Add VAT to prices that exclude it
                    item['price'] *= self.vat_factor
                # Return the item so later pipelines receive it
                return item
            else:
                raise DropItem('Missing price in %s' % item)

(2) Writing a JSON file

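    import json


    class JsonWriterPipeline(object):
        def __init__(self):
            # Text mode, because json.dumps returns str
            # ('wb' only works on Python 2)
            self.file = open('json.jl', 'w')

        def process_item(self, item, spider):
            # One JSON object per line (JSON Lines)
            line = json.dumps(dict(item)) + '\n'
            self.file.write(line)
            return item

(3) Checking for duplicates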

    from scrapy.exceptions import DropItem


    class Duplicates(object):
        def __init__(self):
            # IDs of the items seen so far in this crawl
            self.ids_seen = set()

        def process_item(self, item, spider):
            if item['id'] in self.ids_seen:
                raise DropItem('Duplicate item found : %s' % item)
            else:
                self.ids_seen.add(item['id'])
                return item

As for writing the data to a database, that should be simple too: store the item inside the process_item method.
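One possible shape for such a database pipeline, sketched with the standard library's sqlite3 module. The class name, the items table, its columns, and the items.db path are all assumptions, and the sketch expects item['id'] and item['name'] to be plain strings rather than the lists that extract() returns. open_spider and close_spider are the optional pipeline methods Scrapy calls when a spider starts and finishes.

```python
import sqlite3


class SQLitePipeline(object):
    def __init__(self, db_path="items.db"):
        # Hypothetical default path; ":memory:" is handy for experiments.
        self.db_path = db_path
        self.conn = None

    def open_spider(self, spider):
        # Called when the spider opens: connect and make sure the table exists.
        self.conn = sqlite3.connect(self.db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS items (id TEXT PRIMARY KEY, name TEXT)"
        )

    def process_item(self, item, spider):
        # Parameterised query; a repeated id overwrites the earlier row.
        self.conn.execute(
            "INSERT OR REPLACE INTO items (id, name) VALUES (?, ?)",
            (item["id"], item["name"]),
        )
        self.conn.commit()
        return item

    def close_spider(self, spider):
        # Called when the spider closes: release the connection.
        self.conn.close()


# Usage outside Scrapy, with plain dicts standing in for items.
pipeline = SQLitePipeline(":memory:")
pipeline.open_spider(spider=None)
pipeline.process_item({"id": "1", "name": "first"}, spider=None)
pipeline.process_item({"id": "1", "name": "first, updated"}, spider=None)
rows = pipeline.conn.execute("SELECT id, name FROM items").fetchall()
pipeline.close_spider(spider=None)
print(rows)
```

Like the other pipelines, it only takes effect once it is listed in the ITEM_PIPELINES setting in settings.py.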