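I wanted to build a site that collects WeChat articles, but WeChat itself exposes no entry link to crawl from. After going through a lot of material online, I found that most approaches are broadly the same: they all use Sogou as the entry point. Below is the Python code I put together for crawling WeChat articles; read on if you are interested.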

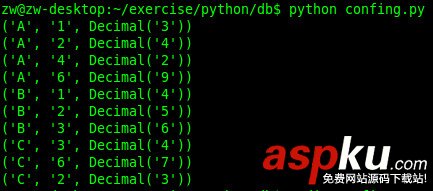

    #!/usr/bin/env python
    # coding: utf-8
    __author__ = 'haoning'

    import time
    import datetime
    import requests
    import json
    import sys
    import re
    import xml.etree.ElementTree as ET
    import os

    # OPENID = 'oIWsFtyel13ZMva1qltQ3pfejlwU'
    OPENID = 'oIWsFtw_-W2DaHwRz1oGWzL-wF9M&ext'
    XML_LIST = []

    # get current time in milliseconds
    current_milli_time = lambda: int(round(time.time() * 1000))


    def get_json(pageIndex):
        # fetch one page of the account's article list through Sogou
        the_headers = {
            'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/39.0.2171.95 Safari/537.36',
            'Referer': 'http://weixin.sogou.com/gzh?openid={0}'.format(OPENID),
            'Host': 'weixin.sogou.com'
        }
        url = 'http://weixin.sogou.com/gzhjs?cb=sogou.weixin.gzhcb&openid={0}&page={1}&t={2}'.format(OPENID, pageIndex, current_milli_time())
        print(url)
        response = requests.get(url, headers=the_headers)
        # TO-DO: check that the response matches the expected pattern
        response_text = response.text
        print(response_text)
        # slice the JSON payload out of the sogou.weixin.gzhcb(...) wrapper;
        # these offsets assume the first ')' in the response closes the callback
        json_start = response_text.index('sogou.weixin.gzhcb(') + 19
        json_end = response_text.index(')') - 2
        json_str = response_text[json_start:json_end]
        # print(json_str)
        # convert json_str to a JSON object
        json_obj = json.loads(json_str)
        # print(json_obj['totalPages'])
        return json_obj


    def add_xml(jsonObj):
        xmls = jsonObj['items']  # the XML items on this page
        # print(type(xmls))
        XML_LIST.extend(xmls)  # extend the global list with the new items


    # ------------ Main ----------------
    print('play it :) ')

    # get total pages
    default_json_obj = get_json(1)
    total_pages = 0
    total_items = 0
    if default_json_obj:
        # add the default xmls
        add_xml(default_json_obj)
        # get the rest items
        total_pages = default_json_obj['totalPages']
        total_items = default_json_obj['totalItems']
        print(total_pages)

    # iterate all pages
    if total_pages >= 2:
        for pageIndex in range(2, total_pages + 1):
            add_xml(get_json(pageIndex))  # extend XML_LIST with this page
            print('load page ' + str(pageIndex))

    print(len(XML_LIST))
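The script leaves a TO-DO about checking whether the response matches the expected pattern before slicing it by fixed offsets. One way to do that is sketched below, assuming the endpoint returns a JSONP payload of the form `sogou.weixin.gzhcb({...})`, possibly followed by trailing text. The helper name `extract_jsonp` and the sample payload are hypothetical and not part of the original script.

```python
import json
import re


def extract_jsonp(text, callback='sogou.weixin.gzhcb'):
    """Return the JSON value wrapped in callback(...), or None if absent.

    Hypothetical helper: assumes the payload is a single JSON value
    passed as the first argument of the callback.
    """
    # Find "callback(" and skip any whitespace after the parenthesis.
    match = re.search(re.escape(callback) + r'\(\s*', text)
    if match is None:
        return None
    try:
        # raw_decode parses exactly one JSON value starting at the given
        # index and ignores whatever follows, so a ")" inside an article
        # title cannot truncate the payload.
        obj, _end = json.JSONDecoder().raw_decode(text, match.end())
    except ValueError:
        return None
    return obj


# Made-up sample response, for illustration only.
sample = 'sogou.weixin.gzhcb({"totalPages": 2, "items": ["<a>(x)</a>"]})\n'
result = extract_jsonp(sample)
print(result['totalPages'])
```

With a helper like this, `get_json` could return `None` for an unexpected response, which the `if default_json_obj:` check in the main section already handles.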